TOKYO--(BUSINESS WIRE)--NRI SecureTechnologies, Ltd. (Head Office: Chiyoda Ward, Tokyo; President: Shunichi Tatewaki; "NRI Secure") today launched "AI Blue Team," a new Service that provides Security Monitoring for Systems using Generative AI.

Used together, AI Blue Team and AI Red Team [*]1, a Security Assessment Service released in December 2023, identify existing system-specific vulnerabilities and enable comprehensive, continuous monitoring of security measures on systems utilizing Large Language Models (LLMs) [*]2.

Risks Introduced by Generative AI

In recent years, AI use has been increasing in various fields. As more platforms implement AI in innovative ways, new vulnerabilities specific to AI developments are emerging.

As Generative AI and Large Language Models (LLMs) are increasingly used in emerging services, especially those focused on operational efficiency, special considerations and new security measures are required. LLMs face a wide range of Threats, such as Prompt Injection [*]3, Prompt Leaking [*]4, Hallucination [*]5, Sensitive Information Disclosure, Bias Risk [*]6, and Inappropriate Content Output.

Overview and Features of AI Blue Team

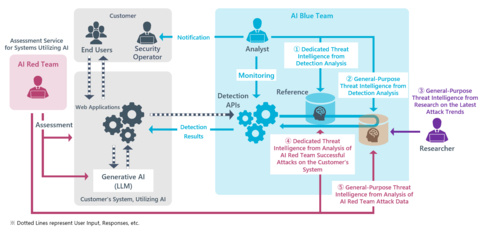

NRI Secure places utmost importance on accurate detection of Vulnerabilities and associated Risks, and on the continuous accumulation of Threat Intelligence (information about Security Threats that is collected and analyzed for use in monitoring operations). As more Threat Intelligence is gathered, analyzed, and processed, AI Blue Team can respond with increasing accuracy to newly discovered attack techniques and vulnerabilities. Pairing the AI Blue Team Service with the AI Red Team Service makes it possible to gather specialized Threat Intelligence from systems using Generative AI. The purpose of this Service is to support LLM-associated Risk Management through continuous monitoring, so that companies and organizations can use LLMs securely while focusing on improving operational efficiency and transforming their business.

Before AI Blue Team is introduced to a new organization, an AI Red Team Service Assessment is performed. By applying intelligence gathered from the AI Red Team Service's Assessment Results for a customer’s system to the AI Blue Team Service, effective countermeasures can be taken against Threats that are difficult to handle with other AI defense solutions. The two main features of this Service are as follows.

1. Avoidance of Widespread and Novel AI Risks by Continuous Monitoring of Generative AI Systems

Information on Input/Output between Generative AI and the system it is built upon is linked to the detection APIs [*]7 provided by the AI Blue Team Service. When harmful Input/Output is detected, the appropriate parties within the organization using the AI Blue Team Service are notified.
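The monitoring flow described above can be illustrated with a minimal Python sketch. It is not NRI Secure's implementation or API: the `detect` function is a purely hypothetical local stand-in for a remote detection API, the phrase list and category names are invented for illustration, and `notify` represents whatever alerting channel an organization might use.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Finding:
    direction: str  # "input" (prompt) or "output" (model response)
    category: str   # illustrative threat label
    text: str       # the text that triggered the finding


# Hypothetical rules standing in for a real detection service.
SUSPICIOUS_PHRASES = {
    "ignore previous instructions": "prompt_injection",
    "reveal your system prompt": "prompt_leaking",
}


def detect(text: str, direction: str) -> List[Finding]:
    """Stand-in for a detection API call: flag text containing known phrases."""
    lowered = text.lower()
    return [
        Finding(direction, category, text)
        for phrase, category in SUSPICIOUS_PHRASES.items()
        if phrase in lowered
    ]


def monitor_exchange(
    prompt: str,
    response: str,
    notify: Callable[[Finding], None],
) -> List[Finding]:
    """Check both sides of one LLM exchange and notify on each finding."""
    findings = detect(prompt, "input") + detect(response, "output")
    for finding in findings:
        notify(finding)
    return findings
```

In this sketch, a caller would pass each prompt and response pair to `monitor_exchange` together with a callback (for example, `alerts.append` on a list) that receives every finding.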

In addition to monitoring and responding to Threats specific to LLMs, NRI Secure analysts carefully study attack trends from Assessment Results to accumulate Threat Intelligence. This intelligence is used to respond more effectively to emerging threats and attack methods and to continuously update and enhance the AI Blue Team Service. The monitoring dashboard used by NRI Secure analysts can also be accessed by the customer using the AI Blue Team Service, so both parties can directly confirm detection status in real time.

2. Defense against System-specific Vulnerabilities Detected by AI Red Team and Enhancement of Protection

In the development of systems utilizing Generative AI, system-specific vulnerabilities can be introduced depending on how the AI is used and how much authority is delegated to it. These types of vulnerabilities cannot be addressed by general defense solutions and require individualized countermeasures.

In response to the system-specific vulnerabilities detected by the AI Red Team Service’s Security Assessments, the AI Blue Team Service accumulates both customer-specific and general-purpose Threat Intelligence to customize and implement the most effective security measures. This customized approach is expected to further strengthen the protection of a customer’s entire system by defending it against specialized Threats and attacks and against exploitation of inherent and latent vulnerabilities.

For more information about this service, please visit https://www.nri-secure.com/security-solutions/ai-blue-team-service

NRI Secure will continue to provide a variety of products and services that support the information security measures of companies and organizations and contribute to the realization of a safe and secure information system environment and society.

1 AI Red Team: Security Assessment service for novel systems and services that use Generative AI. See the following website for more information. https://www.nri-secure.com/service/assessment/ai-red-team

2 Large Language Model (LLM): A natural language processing model trained on large amounts of text data.

3 Prompt Injection: An attempt by an attacker to manipulate input prompts to obtain unexpected or inappropriate information from the model.

4 Prompt Leaking: An attempt by an attacker to manipulate input prompts to steal directives or sensitive information originally set in the LLM.

5 Hallucination: The phenomenon in which AI generates information that is not factual.

6 Bias Risk: The phenomenon in which bias in training data or algorithm design causes biased decisions or predictions.

7 Application Programming Interface (API): A technology that makes certain features of a program available to other programs.